Alexa Skill Prototyping

As Head of Prototyping, when I first started researching chatbots and Voice User Interfaces (VUI), I came across several methodologies for creating Alexa Skills. Designing a product for voice-driven devices, like Amazon Alexa or Google Home (and now, eventually, Apple HomePod), brings new challenges. Over the years we have built an enormous toolkit for approaching product development: scribbles, user stories, lo-fi prototypes, hi-fi visual designs and so on. But all of it is really useless when all you’re facing is the “lady in the tube”.

Voice User Interface

In BBC R&D we’ve been running a project called Talking with Machines which aims to understand how to design and build software and experiences for voice-driven devices…

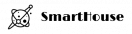

The Process

I’ll try to cover the essence of each step – you are welcome to read the longer version on the BBC Blog itself.

Scenario Mapping

The main reason for visual mapping is to answer two simple questions: “Why and how would our Skill be used?”

Take social context, for example: a Skill’s use case is totally different from a mobile app’s. An app captures your individual attention, whereas an Amazon Alexa device usually sits in your living room and is used in a broader, social context. In other words, everyone in the room can interact with your Skill.

Once several “ingredients” of the future scenarios are ready, it’s easier to come up with new and different ideas about what your Skill will actually do, and how, when, by whom and why it will be used.

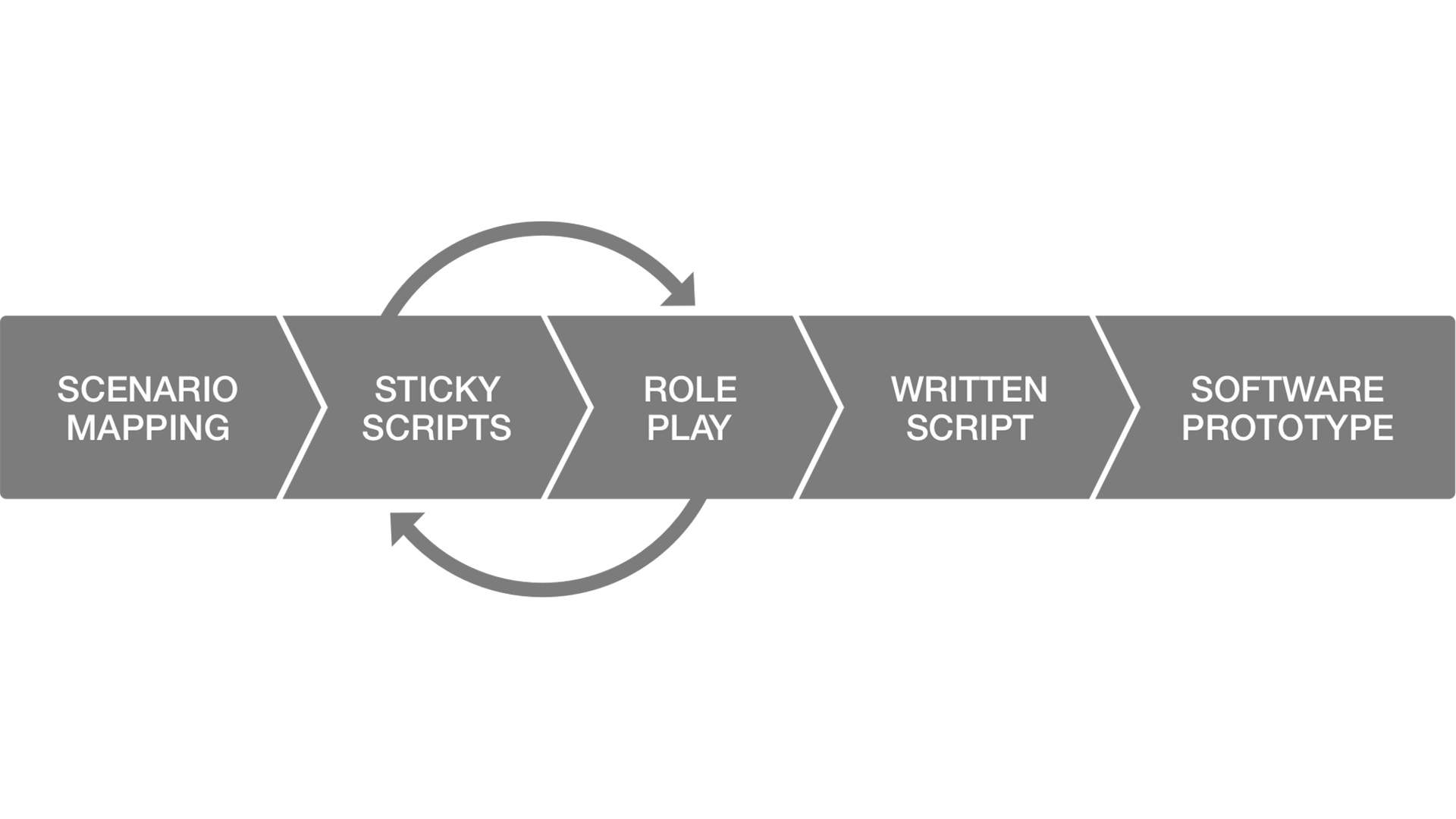

Sticky Scripts

The second step of voice interface prototyping is creating an actual script using sticky notes. It helps visualize the decision tree and will be useful for the role playing that follows. Color coding also helps to identify individual actions, such as information being processed or an external API being queried.
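A sticky-note script is essentially a small decision tree, so it can also be captured in a few lines of code once the wall gets crowded. The sketch below is only an illustration: the note kinds, the colours assigned to them, and the sample news dialogue are all assumptions made up for this example, not part of the BBC methodology.

```python
from dataclasses import dataclass, field
from enum import Enum


class NoteKind(Enum):
    """Hypothetical colour coding for the sticky notes."""
    USER_SAYS = "yellow"
    SYSTEM_SAYS = "blue"
    API_QUERY = "pink"


@dataclass
class Note:
    kind: NoteKind
    text: str
    # Maps a branch label (e.g. the user's answer) to the next note.
    branches: dict[str, "Note"] = field(default_factory=dict)


def paths(note, trail=()):
    """Yield every route through the tree as a tuple of notes."""
    trail = trail + (note,)
    if not note.branches:
        yield trail
        return
    for next_note in note.branches.values():
        yield from paths(next_note, trail)


# A made-up dialogue, purely for illustration.
goodbye = Note(NoteKind.SYSTEM_SAYS, "OK, see you later.")
headlines = Note(NoteKind.SYSTEM_SAYS, "Here are the headlines.")
fetch = Note(NoteKind.API_QUERY, "Fetch today's headlines", {"done": headlines})
ask = Note(NoteKind.SYSTEM_SAYS, "Would you like the news?",
           {"yes": fetch, "no": goodbye})
launch = Note(NoteKind.USER_SAYS, "Open the demo skill", {"next": ask})

for route in paths(launch):
    print(" -> ".join(f"[{n.kind.value}] {n.text}" for n in route))
```

Listing every route this way gives you a checklist of conversations to act out, one per path through the tree.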

Role Play

One of the most important steps of the process is role playing.

The BBC team actually had a different person “play” each individual part:

* System voice

* Triggered sound effects

* Quick props

* Machine Roles: making queries against data sources

* User (who doesn’t get to see the script)

This step was extraordinarily useful when I started developing our first Skills. Something magical happens when you recruit someone who is not familiar with the script you’ve so laboriously created. You can see first-hand the problems, pauses, misunderstandings and dead ends in your script.

Written Script

This is something that we at SmartHouse do differently. We create mind maps rather than full written scripts. That makes it much easier for other people to understand the scenario flows.

What we did learn from the BBC, though, is to run the script through machines. There are things that look good on the screen, but totally change when said out loud.
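One low-effort way to hear a script is to wrap its lines in SSML, the W3C speech markup that Alexa and many text-to-speech engines accept, and feed the result to whichever engine you have at hand. The helper below is a minimal sketch under that assumption: the function name `to_ssml` and the default pause length are invented for this example.

```python
from xml.sax.saxutils import escape


def to_ssml(lines, pause_ms=400):
    """Wrap script lines in an SSML <speak> element with a pause between lines."""
    pause = f'<break time="{pause_ms}ms"/>'
    # escape() protects characters such as & and < that would break the XML.
    body = pause.join(f"<s>{escape(line)}</s>" for line in lines)
    return f"<speak>{body}</speak>"


script = ["Welcome back.", "News & weather?"]
print(to_ssml(script, pause_ms=300))
# <speak><s>Welcome back.</s><break time="300ms"/><s>News &amp; weather?</s></speak>
```

Tweaking the pause length and listening again is a quick way to find lines that read well but sound rushed or stilted.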

One of the tools we use is Sayspring. It gives us a glimpse of what our Skill would sound like before we write a single line of code.

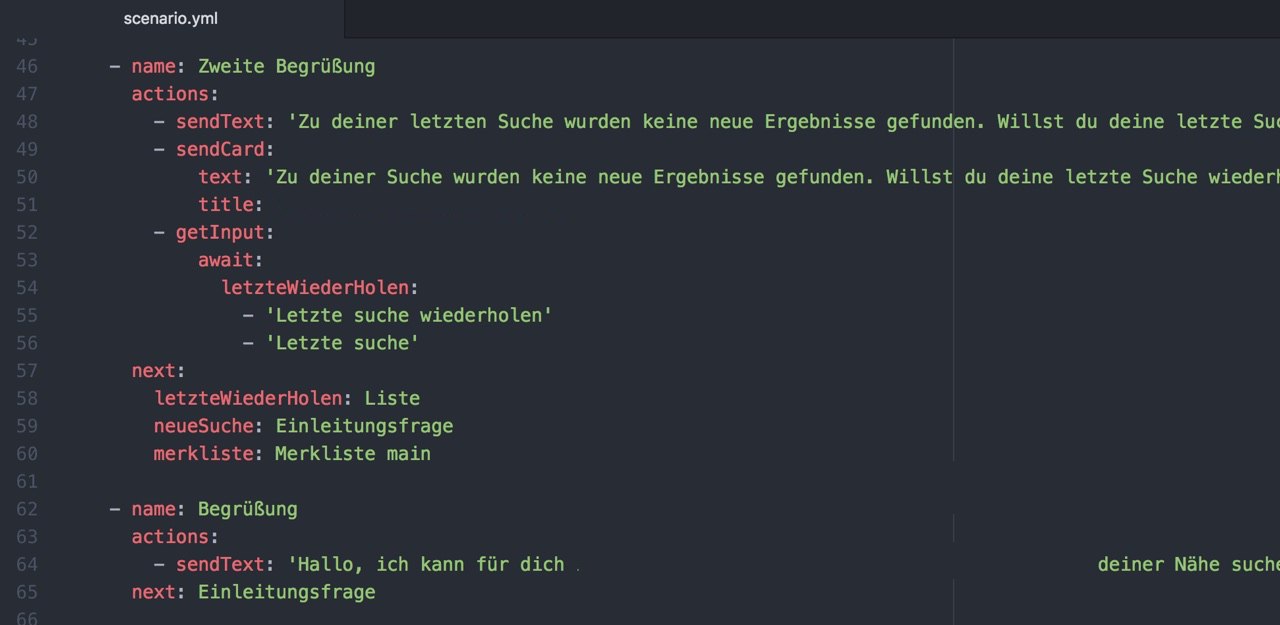

Software Prototypes

The BBC suggests using the platform you’re building your Skill for to build the actual software prototypes:

“Another benefit of building a prototype on the target platform itself is that it’s possible to iterate from a prototype to a releasable product – as long as you’re careful to refactor and tidy up as you go”.

We found that quite hard to do. That’s why we created our own solution: writing scripts in a simple markup language, much like you would with Markdown. But that is a topic for another post…

Thanks

Once again – thank you BBC R&D for putting this amazing methodology out there for everyone to learn from.

What are you using to create your chatbots and Skills? Are there any tricks you would like to share with us? Drop us a line in the comments below!